# Prediction from continuous outcome

```{block, type='rmdcomment'}

This chapter covers regression, which deals with the prediction of continuous outcomes. We use multiple linear regression to build our first prediction model.

```

- Watch the [video describing this chapter](https://youtu.be/TmrG2ZbeJoY)

```{r setup02, include=FALSE}
require(tableone)
require(Publish)
require(MatchIt)
require(cobalt)
require(ggplot2)
require(caret)
```

## Read previously saved data

```{r}

ObsData <- readRDS(file = "data/rhcAnalytic.RDS")

```

## Prediction for length of stay

```{block, type='rmdcomment'}

In this section, we fit a regression model for a continuous outcome (length of stay).

```

## Variables

```{r vars2, cache=TRUE, echo = TRUE}
# Use every column except the two outcomes as a baseline covariate
baselinevars <- names(dplyr::select(ObsData,
                                    !c(Length.of.Stay, Death)))
baselinevars
```

## Model

```{r reg2, cache=TRUE, echo = TRUE, results='hide'}
# Build the outcome formula: length of stay adjusted for all baseline covariates
out.formula1 <- as.formula(paste("Length.of.Stay~ ",
                                 paste(baselinevars,
                                       collapse = "+")))
saveRDS(out.formula1, file = "data/form1.RDS")
# Fit the multiple linear regression
fit1 <- lm(out.formula1, data = ObsData)
# Extract the adjusted regression table
require(Publish)
adj.fit1 <- publish(fit1, digits=1)$regressionTable
```

```{r reg2a, cache=TRUE, echo = TRUE}

out.formula1

adj.fit1

```
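
The formula above is assembled by pasting variable names into a string. As a small sketch of the same idea, the snippet below builds a formula from a vector of names in two ways: with `paste()` plus `as.formula()`, as in this chapter, and with base R's `reformulate()`. The variable names (`age`, `sex`, `income`, `y`) are hypothetical and are not columns of `ObsData`.

```r
# Hypothetical variable names, used only for illustration
vars <- c("age", "sex", "income")

# Approach used in this chapter: paste the names together, then convert
f_paste <- as.formula(paste("y ~", paste(vars, collapse = " + ")))

# Alternative sketch: stats::reformulate() builds the formula from the names
f_reform <- reformulate(vars, response = "y")

f_paste
f_reform

# Compare the two formulas as text (their environments are not compared)
identical(deparse(f_paste), deparse(f_reform))
```

Either approach should work for `lm()`; `reformulate()` simply avoids the manual string handling.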

### Design Matrix

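- Notation
    - $n$ is the number of observations
    - $p$ is the number of covariates; in the code below, `p` counts the columns of the design matrix (intercept plus slopes)

The design matrix expands each factor into a set of dummy variables.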

```{r}
dim(ObsData)
# Number of terms on the right-hand side of the formula
length(attr(terms(out.formula1), "term.labels"))
```

```{r}
head(model.matrix(fit1))
dim(model.matrix(fit1))
p <- dim(model.matrix(fit1))[2] # intercept + slopes
p
```
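
To see the dummy-variable expansion on a small scale, here is a minimal sketch using a made-up data frame (the `toy` data and its column names are assumptions for illustration, not part of the chapter's data). With R's default treatment contrasts, a factor with three levels contributes two dummy columns, because the first level serves as the reference.

```r
# Toy data (hypothetical): one numeric covariate and one 3-level factor
toy <- data.frame(
  y     = c(5, 7, 6, 9, 8, 10),
  age   = c(30, 45, 50, 62, 38, 55),
  group = factor(c("a", "b", "c", "a", "b", "c"))
)

# Build the design matrix for y ~ age + group
X_toy <- model.matrix(y ~ age + group, data = toy)
X_toy

colnames(X_toy)  # intercept, age, and one dummy per non-reference level
dim(X_toy)       # rows = observations; columns = intercept + 1 + (3 - 1)
```

This is why the number of columns of `model.matrix(fit1)` can exceed the number of terms in the formula.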

### Obtain prediction

```{r}
obs.y <- ObsData$Length.of.Stay
summary(obs.y)
# Predict from the fitted model on the same data used to fit it (ObsData)
pred.y1 <- predict(fit1, ObsData)
summary(pred.y1)
n <- length(pred.y1)
n
# Observed vs. predicted values, with a lowess smoother in red
plot(obs.y, pred.y1)
lines(lowess(obs.y, pred.y1), col = "red")
```

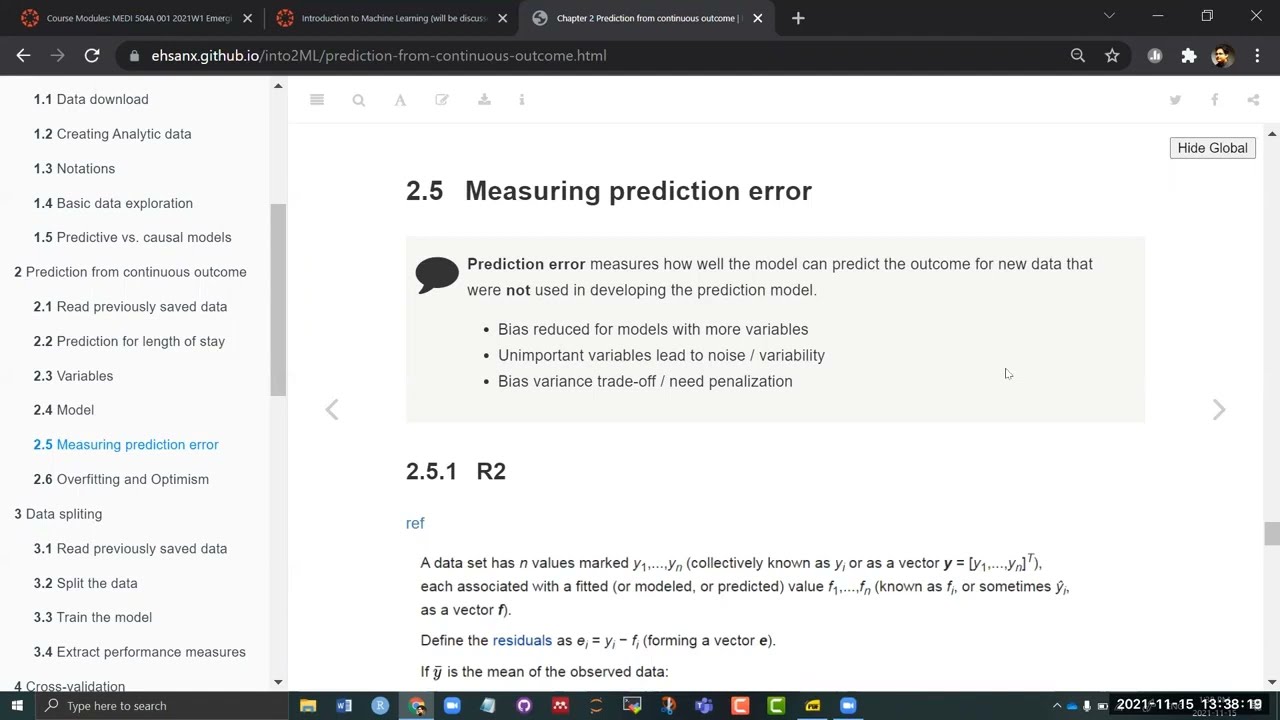

## Measuring prediction error

```{block, type='rmdcomment'}

**Prediction error** measures how well the model predicts the outcome for new data that were **not** used to develop the prediction model.

- Bias decreases as more variables are added to the model
- Unimportant variables add noise and increase variability
- This is the bias-variance trade-off, which motivates penalization

```
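
The trade-off listed above is often summarized by the standard decomposition of the expected squared prediction error at a new point $x_0$. The notation is an assumption introduced here for illustration: $y_0 = f(x_0) + \varepsilon$ with $E[\varepsilon] = 0$ and $\mathrm{Var}(\varepsilon) = \sigma^2$, $\hat{f}$ is the model fitted on the training data, and the new observation is independent of the training data.

$$
E\left[\left(y_0 - \hat{f}(x_0)\right)^2\right]
= \underbrace{\left(E[\hat{f}(x_0)] - f(x_0)\right)^2}_{\text{bias}^2}
+ \underbrace{\mathrm{Var}\left(\hat{f}(x_0)\right)}_{\text{variance}}
+ \underbrace{\sigma^2}_{\text{irreducible error}}
$$

Adding variables tends to shrink the first term and inflate the second, which is the tension the list above describes.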

### R2

[ref](https://en.wikipedia.org/wiki/Coefficient_of_determination)

```{r ubc8, echo=FALSE, out.width = '90%'}

knitr::include_graphics("images/r2.png")

```

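```{r}
# Find SSE (sum of squared errors)
SSE <- sum( (obs.y - pred.y1)^2 )
SSE
# Find SST (total sum of squares)
mean.obs.y <- mean(obs.y)
SST <- sum( (obs.y - mean.obs.y)^2 )
SST
# Find R2
R.2 <- 1- SSE/SST
R.2
# caret::R2() defaults to the squared correlation between predictions and
# observations; for an in-sample least-squares fit with an intercept this
# equals 1 - SSE/SST
require(caret)
caret::R2(pred.y1, obs.y)
```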
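
Written out, the quantities computed in the chunk above are as follows, where $y_i$ is the observed outcome (`obs.y`), $\hat{y}_i$ is the prediction (`pred.y1`), and $\bar{y}$ is the observed mean (`mean.obs.y`). The symbols are introduced here for illustration and may differ from the notation in the figure.

$$
SSE = \sum_{i=1}^{n} (y_i - \hat{y}_i)^2, \qquad
SST = \sum_{i=1}^{n} (y_i - \bar{y})^2, \qquad
R^2 = 1 - \frac{SSE}{SST}
$$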

### RMSE

[ref](https://en.wikipedia.org/wiki/One-way_analysis_of_variance)

```{r ubc89, echo=FALSE, out.width = '90%'}

knitr::include_graphics("images/rmse.png")

```

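```{r}
# Find RMSE, dividing SSE by the residual degrees of freedom (n - p)
Rmse <- sqrt(SSE/(n-p))
Rmse
# caret::RMSE() divides by n instead, i.e. sqrt(mean((pred - obs)^2)),
# so it is slightly smaller than the value above
caret::RMSE(pred.y1, obs.y)
```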
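
The two numbers in the chunk above differ only in the denominator. The sketch below illustrates this on the built-in `mtcars` data rather than the chapter's data; the model `mpg ~ wt + hp` and the `_toy` variable names are assumptions chosen for illustration. The degrees-of-freedom version should match the residual standard error reported by `summary()`.

```r
# Illustration on the built-in mtcars data (not the chapter's data)
fit_toy  <- lm(mpg ~ wt + hp, data = mtcars)
obs_toy  <- mtcars$mpg
pred_toy <- predict(fit_toy, mtcars)

n_toy   <- length(obs_toy)
p_toy   <- ncol(model.matrix(fit_toy))   # intercept + slopes
SSE_toy <- sum((obs_toy - pred_toy)^2)

rmse_df    <- sqrt(SSE_toy / (n_toy - p_toy))  # residual degrees of freedom
rmse_plain <- sqrt(SSE_toy / n_toy)            # root of the mean squared error

c(rmse_df    = rmse_df,
  sigma      = summary(fit_toy)$sigma,   # residual standard error from lm
  rmse_plain = rmse_plain)
```

When $n$ is large relative to $p$, the two versions are close; the gap widens as the model uses up more degrees of freedom.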

### Adj R2

[ref](https://en.wikipedia.org/wiki/Coefficient_of_determination#Adjusted_R2)

```{r ubc899, echo=FALSE, out.width = '30%'}

knitr::include_graphics("images/r2a.png")

```

```{r}
# Find adj R2 (p counts the intercept, so the residual df is n - p)
adjR2 <- 1-(1-R.2)*((n-1)/(n-p))
adjR2
```
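
As a quick sanity check of the formula above, the following sketch recomputes adjusted $R^2$ by hand on the built-in `mtcars` data and compares it with the value from `summary()`. The model and the `_chk` variable names are assumptions for illustration only.

```r
# Illustration on the built-in mtcars data (not the chapter's data)
fit_chk <- lm(mpg ~ wt + hp, data = mtcars)
s_chk   <- summary(fit_chk)

n_chk  <- nrow(mtcars)
p_chk  <- ncol(model.matrix(fit_chk))   # intercept + slopes
R2_chk <- s_chk$r.squared

# Same expression as in the chapter, with p counting the intercept
adjR2_manual <- 1 - (1 - R2_chk) * ((n_chk - 1) / (n_chk - p_chk))

c(manual = adjR2_manual, summary = s_chk$adj.r.squared)
```

The hand calculation agrees with `summary()` only when `p` includes the intercept, as it does here.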

## Overfitting and Optimism

```{block, type='rmdcomment'}

- A model usually performs very well on the data used to fit it, so its apparent performance is optimistic

- The same model often performs worse on new data (it does not generalize as well)

```

### Causes

- Model determined by the data at hand, without expert opinion

- Too many predictors or model parameters (e.g., $age$, $age^2$, $age^3$)

- Training dataset too small, or data too noisy

### Consequences

- Overestimation of effects of predictors

- Reduction in model performance in new observations
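
A small simulation can make these points concrete. The sketch below is an illustration under assumed settings (simulated data with a truly linear relationship, 30 training rows, and a degree-10 polynomial as the over-parameterized model); none of these choices come from the chapter's data. The complex model cannot fit the training data worse than the simple one, but it typically does worse on fresh data.

```r
set.seed(123)  # arbitrary seed so the sketch is reproducible

# Simulated data (hypothetical): the true relationship is linear in x
simulate_data <- function(n) {
  x <- runif(n, 0, 10)
  data.frame(x = x, y = 2 + 0.5 * x + rnorm(n, sd = 2))
}
train_sim <- simulate_data(30)
test_sim  <- simulate_data(1000)

# Root mean squared error of a fitted model on a given data set
rmse_on <- function(fit, data) sqrt(mean((data$y - predict(fit, data))^2))

# A simple model and a heavily over-parameterized polynomial
fit_simple  <- lm(y ~ x, data = train_sim)
fit_complex <- lm(y ~ poly(x, 10), data = train_sim)

rbind(
  simple  = c(train = rmse_on(fit_simple, train_sim),
              test  = rmse_on(fit_simple, test_sim)),
  complex = c(train = rmse_on(fit_complex, train_sim),
              test  = rmse_on(fit_complex, test_sim))
)
```

The gap between the `train` and `test` columns for a given model is one way to picture its optimism.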

### Proposed solutions

We generally use procedures such as

- Internal validation
    - sample splitting
    - cross-validation
    - bootstrap
- External validation
    - Temporal
    - Geographical
    - Different data source to calculate same variable
    - Different disease
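
Two of the internal validation procedures listed above, sample splitting and cross-validation, can be sketched in a few lines of base R. This is a minimal illustration on the built-in `mtcars` data, with an assumed model formula, a 70/30 split, and 5 folds; it is not the procedure applied to the chapter's data.

```r
set.seed(42)  # arbitrary seed for reproducibility

dat_cv  <- mtcars           # built-in data, used only for illustration
form_cv <- mpg ~ wt + hp    # hypothetical model formula
rmse_fn <- function(obs, pred) sqrt(mean((obs - pred)^2))

# 1. Sample splitting: fit on about 70% of the rows, evaluate on the rest
train_id   <- sample(nrow(dat_cv), size = floor(0.7 * nrow(dat_cv)))
fit_split  <- lm(form_cv, data = dat_cv[train_id, ])
rmse_split <- rmse_fn(dat_cv$mpg[-train_id],
                      predict(fit_split, dat_cv[-train_id, ]))

# 2. k-fold cross-validation: every row is held out exactly once
k_folds <- 5
fold_id <- sample(rep(seq_len(k_folds), length.out = nrow(dat_cv)))
rmse_cv <- sapply(seq_len(k_folds), function(j) {
  fit_j <- lm(form_cv, data = dat_cv[fold_id != j, ])
  rmse_fn(dat_cv$mpg[fold_id == j], predict(fit_j, dat_cv[fold_id == j, ]))
})

# 3. Apparent (in-sample) error, for comparison
fit_all       <- lm(form_cv, data = dat_cv)
rmse_apparent <- rmse_fn(dat_cv$mpg, predict(fit_all, dat_cv))

c(apparent = rmse_apparent, split = rmse_split, cv = mean(rmse_cv))
```

Packages such as `caret`, which this chapter already loads, offer helpers for the same tasks; the base R version is shown here only to keep the mechanics visible.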